You ship an AI agent . Your offline benchmark scores look solid — the model answers evaluation questions at 87% accuracy. Then in production: it calls the wrong tool, passes malformed arguments, loops through five unnecessary steps to answer a question that should take two, and ultimately fails to resolve the user’s intent even though its final text output sounds plausible.

That gap between benchmark score and production behavior is the central problem in agent evaluation today. Traditional accuracy metrics were designed for a world where an LLM receives one input and returns one output. Agents plan, select tools, execute multi-step trajectories, and adapt mid-run. Measuring them like static models means you’re flying blind.

This guide covers the three-layer evaluation framework engineers are converging on, the four agent evaluation metrics that map to real failure modes, and how Langfuse, Comet Opik, and LangSmith integrate these metrics into CI/CD pipelines.

Why Accuracy Benchmarks Fail for Agentic Systems

MMLU — the 57-task benchmark spanning mathematics, history, law, and more — was designed to evaluate whether a model can answer multiple-choice questions correctly [6]. It tells you almost nothing about whether an agent will reliably complete a multi-step workflow in production.

Anthropic has documented several structural problems with popular benchmarks: data contamination from training set overlap, ambiguous labeling, and saturation effects as frontier models approach ceiling scores [6]. These problems compound when applied to agent tasks.

The deeper issue is architectural. LangSmith’s evaluation documentation states it directly: agents require evaluation of “correct tool selection and proper argument formatting or trajectory” — fundamentally different criteria from text-quality metrics [5]. An agent can produce a perfectly fluent answer via entirely wrong tool calls. A static accuracy benchmark misses this failure entirely; it scores the output, not the path that produced it.

The Three-Layer Evaluation Framework

The practitioner community has converged on a three-layer model that mirrors how agents actually operate.

Layer 1 — Reasoning quality evaluates the agent’s internal chain-of-thought and planning before any action is taken [5]. Is the agent’s task decomposition coherent? Is it planning to use appropriate tools in a logical sequence? Failures here cause cascading errors — an agent that misunderstands the task structure will make systematically wrong tool choices even when each individual tool works correctly.

Layer 2 — Action correctness evaluates tool call accuracy: was the right tool chosen, and were arguments valid and correctly formatted [4]? This layer is distinct from output quality. An agent can hallucinate a plausible answer without calling any tool, or call a search tool when it should have called a database query. Both are Layer 2 failures invisible to output-level scoring.

Layer 3 — End-to-end execution success measures whether the agent completed the goal [5]. This encompasses task adherence, step efficiency, and intent resolution. A common failure mode: agents that technically complete the specified task but miss the user’s underlying intent — especially in multi-turn conversations.

Hamel Husain’s practitioner post on evals describes a similar progression: starting with unit test assertions on every commit, layering LLM-as-a-judge evaluation on production traces, and eventually A/B testing for major releases [7].

The Four Agent Evaluation Metrics Engineers Actually Use

Task adherence answers: did the agent complete the stated goal? This ranges from binary pass/fail to gradient scoring where partial completion is distinguished from total failure [5]. A customer support agent that resolves 70% of a multi-step issue is different from one that abandons the task entirely.

Tool call accuracy scores whether the agent selected the correct tool and passed valid arguments, computed per-step in the trajectory [5]. A five-step agent that gets steps 1–4 right but fails at step 5 has 80% tool call accuracy — information you lose entirely if you only evaluate the final output.

Intent resolution asks whether the output satisfied the user’s original intent, requiring semantic scoring rather than exact-match [1]. A user asking for “the fastest route to the airport” who receives technically accurate bus directions when they meant driving directions has an unresolved intent — even if the task specification was followed literally. This is where LLM-as-a-judge earns its place.

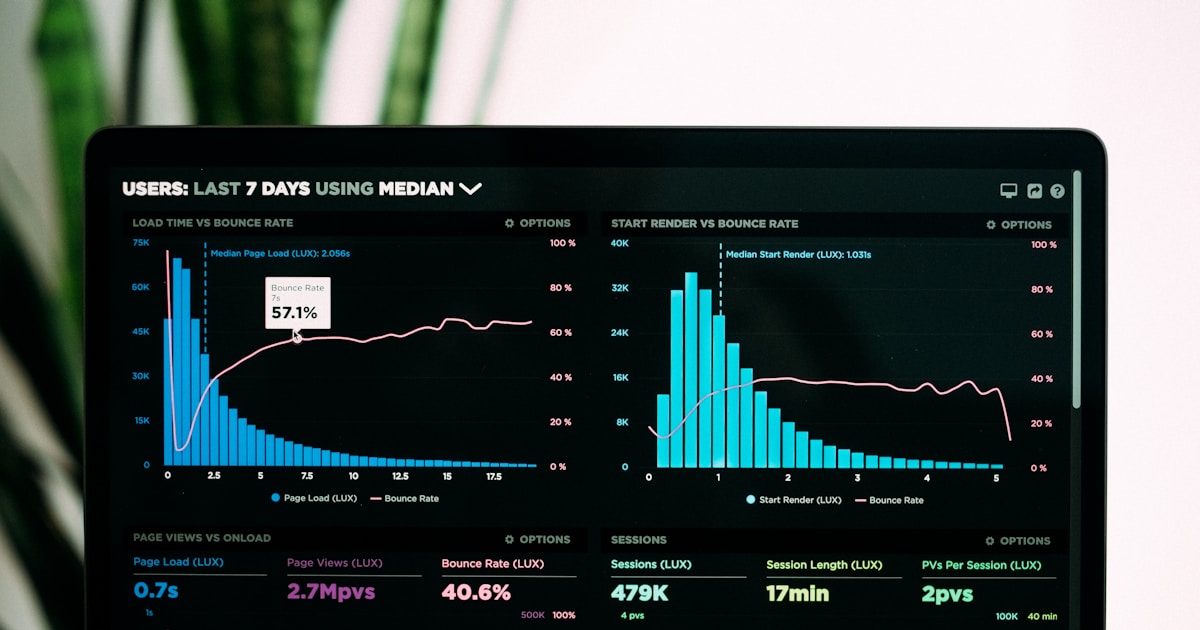

Step efficiency measures the ratio of steps taken versus the minimum viable path [7]. Bloated trajectories — agents taking eight steps for what should take three — signal prompt or planning failures. This metric degrades silently: your agent may produce correct outputs while becoming progressively more expensive and harder to debug.

Offline vs. Online Evaluation: When to Use Each

Offline evaluation happens pre-deployment against fixed datasets, with regression testing as the goal [5]. Run your agent against a curated case set with known expected behaviors and gate deployment on whether scores hold. Langfuse formalizes this as the “experiments” phase with concurrent execution, auto-tracing, and error isolation built into the SDK [1]. LangSmith describes the same lifecycle: offline benchmarking, regression testing, unit testing, and backtesting before any deploy [5].

Online evaluation runs continuously against live production traces [2]. The goal is monitoring: detect anomalies, score the long tail of production traffic, surface new failure modes your offline suite didn’t anticipate. Comet Opik’s “Online Evaluation Rules” let teams define LLM-as-a-judge metrics that automatically score all production traces or a sampled subset at configurable rates up to 100% [2].

The feedback loop between both phases is what makes evaluation compound. Langfuse’s documented workflow shows the pattern: prompt change → offline experiment → score review → deploy → live observation scoring → dataset expansion from production edge cases [1]. Each production failure curated into the offline dataset makes future pre-deploy testing more complete.

LLM-as-a-Judge at Scale: How Teams Score Thousands of Agent Runs

Human review doesn’t scale to production volumes. LLM-as-a-judge has become the standard for scalable evaluation.

Langfuse’s implementation uses rubric-guided scoring: a judge model receives the agent’s input, output, evaluation rubric, and optionally ground-truth. It returns a numeric score plus chain-of-thought reasoning [1]. The chain-of-thought matters — it makes evaluation failures debuggable rather than opaque.

Comet Opik ships built-in LLM-as-a-judge templates including Hallucination detection and Answer Relevance, with support for fully custom judge prompts using mustache-style variable binding to trace fields [2]. The hallucination metric is grounded in the tool outputs and retrieved context the agent had access to — the right framing for agents, since agentic hallucination means stating something not supported by what the tools actually returned.

For production sampling, the practical pattern is tiered: score 100% of traces for cheap heuristic checks (latency, tool call counts, error rates), sample a smaller percentage for LLM-as-a-judge scoring of intent resolution and task adherence, and escalate cases for human review based on score thresholds or user feedback.

CI/CD Integration Patterns for Agent Evaluation

Comet Opik provides native Pytest integration via the @llm_unit decorator. LLM unit tests run as standard CI checks, and Opik tracks pass/fail rates per test to the platform, creating an experiment record per CI run [3]. Evaluation history is automatically versioned alongside code deploys — a key capability for diagnosing when a specific change degraded behavior. For teams building agent optimizer workflows, Opik’s automated prompt tuning integrates with the same experiment infrastructure [8].

Langfuse supports running experiments programmatically through Python and JS/TS SDKs, with custom scoring functions that can gate deployments on score thresholds [1]. The SDK-first design means integration into any CI environment — GitHub Actions, GitLab CI, internal Jenkins pipelines — without platform-specific plugins.

LangSmith positions its offline evaluation suite directly around CI: “regression testing: ensure new versions don’t degrade quality” and “unit testing: verify correctness of individual components” [5]. For teams in the LangChain ecosystem, evaluations can run from the same testing layer as other application tests.

Rechat’s “Lucy” real estate assistant is a documented example of what evaluation-less development produces: Lucy hit a quality plateau that engineering intuition couldn’t diagnose. The fix required building systematic eval from the ground up — starting with tool-call assertions, then layering LLM-as-a-judge scoring on top [7]. Eval debt is real; CI gates built early are cheaper than retrofitting them after production quality degrades.

Tool Landscape Comparison: Langfuse, Opik, LangSmith

Langfuse (approximately 23,000 GitHub stars) is open-source and self-hostable, which matters for data residency requirements [1]. (Note: Langfuse was acquired by ClickHouse in January 2026. Self-hosting and open-source access remain available as of this writing.) Its strongest differentiator is the experiment runner and the tight feedback loop between offline experiments and online production scoring. LLM-as-a-judge with chain-of-thought output is well-documented with detailed rubric design guidance.

Comet Opik stands out on CI/CD ergonomics: the Pytest @llm_unit integration offers a direct path from evaluation to deployment gate [3]. Its Online Evaluation Rules are easy to configure, and it ships the broadest built-in metric template library [2]. Gap: tool-call-level trajectory evaluation is not native and requires custom judge prompts.

LangSmith has the deepest integration with LangChain/LangGraph agent patterns and the most complete offline evaluation lifecycle taxonomy [5]. The trade-off is ecosystem coupling — it works best when building on LangChain primitives. Teams running custom agent frameworks may find Langfuse or Opik more portable.

None of the three natively scores agent reasoning quality (Layer 1) without custom configuration. Evaluating intermediate chain-of-thought quality remains an open gap requiring domain-specific rubrics.

Building Your Agent Eval Stack: A Practical Starting Point

Phase 1 — Unit tests on tool calls. Write deterministic assertions on tool call validity before adding any LLM judge. Did the agent call the search tool with a non-empty query? Did it pass a valid date format to the calendar API? These heuristic checks are cheap, fast, and catch a large class of regressions. Opik’s @llm_unit pytest pattern formalizes this cleanly [3].

Phase 2 — LLM-as-a-judge for intent resolution. Once heuristic coverage is stable, add a judge layer for semantic metrics. Build a rubric that reflects your specific agent’s goals, sample from production traces, and start growing an evaluation dataset [1][2].

Phase 3 — Online evaluation with production feedback loops. Configure online evaluation rules to score live traffic at a meaningful sampling rate [2]. Alert on score threshold regressions. Curate production failures into your offline dataset. This closes the loop — evaluation becomes a compounding asset rather than a one-time effort.

The field is converging on this two-phase model — offline regression testing combined with live production scoring — as the practical baseline for production agent quality [1][5]. Teams that skip it are operating on intuition; teams that implement it have a systematic way to know when their agent improves, degrades, or fails in ways their test suite didn’t anticipate.

What Comes Next for Agent Evaluation

The current tooling solves the measurement problem well enough to act on. What it doesn’t yet solve is automated root-cause attribution: knowing a task adherence score dropped 8% is useful; knowing whether that drop came from a planning failure, a tool selection error, or a prompt regression is the next frontier.

Expect the gap between online monitoring and offline debugging to close over the next 12–18 months as evaluation platforms add automated regression bisection and richer trajectory diffing. For now, teams that build structured eval into their development loop today — even with simple heuristics and a single LLM judge — will be positioned to benefit from those improvements as they arrive. The foundation you build now is the infrastructure you’ll iterate on.

Sources

- [1] Langfuse. Evaluation of LLM Applications — Overview, LLM-as-a-Judge Guide, Experiments via SDK. https://langfuse.com/docs/evaluation/overview (2026-03-06)

- [2] Comet / Opik. Online Evaluation Rules — Opik Documentation. https://www.comet.com/docs/opik/production/rules (2026-03-06)

- [3] Comet / Opik. Pytest Integration — Opik Documentation. https://www.comet.com/docs/opik/testing/pytest_integration (2026-03-06)

- [4] Comet / Opik. Evaluation Metrics Overview — Opik. https://www.comet.com/docs/opik/evaluation/metrics/overview (2026-03-06)

- [5] LangChain / LangSmith. Evaluation Concepts — LangSmith Docs. https://docs.smith.langchain.com/evaluation (2026-03-06)

- [6] Anthropic. Challenges in Evaluating AI Systems. https://www.anthropic.com/research/evaluating-ai-systems (2023)

- [7] Hamel Husain. Your AI Product Needs Evals. https://hamel.dev/blog/posts/evals/ (2024-03)

- [8] Comet. Automate Prompt & Agent Tuning with Opik’s Agent Optimizer. https://www.comet.com/site/blog/automated-prompt-engineering/ (2025)